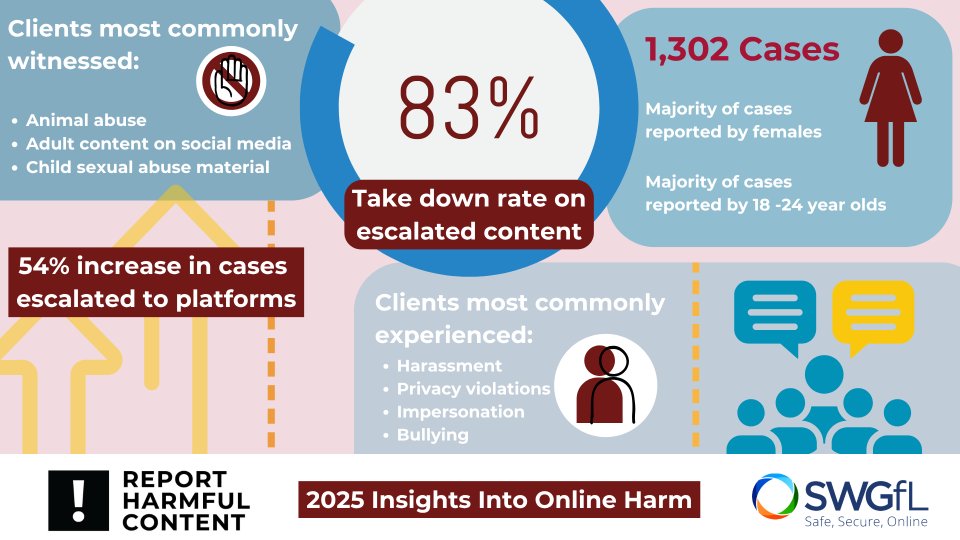

Report Harmful Content (RHC) handled 1,302 cases of individuals reporting instances of online harm in 2025. Harassment was the most reported harm, accounting for 13% of all cases, with its share of cases rising by 5% compared to 2024.

Privacy violations accounted for 11%, also increasing year‑on‑year. This included ongoing issues around image misuse, sharing of personal information, and boundary‑crossing behaviours online.

Some categories dropped slightly. Bullying made up 9% of cases, showing a small decrease, while adult content on social media also sat at 9%. However, reports of adult content rose by 6% overall.

Who is Reporting?

The data shows that:

- 44% of clients were female

- 41% were male

- 15% identified as other or preferred not to say

Young adults continued to feature most strongly in the data. Those aged 18–24 accounted for 27% of cases and within this group:

- Men were more likely to report adult content, sextortion, and content featuring bestiality

- Women most often experienced harassment, privacy violations, and intimate image abuse

People aged 25–34 made up 24% of cases, with harassment and privacy violations being common across genders. Children and young people aged 13–17 accounted for 17%, and their reports were more likely to involve bullying, privacy breaches, impersonation, and witnessing child sexual abuse material (CSAM).

Witnessed Harm Remains a Major Issue

One of the most interesting patterns in the 2025 data is that nearly half of all reports (45%) involved witnessing harm, rather than experiencing it directly. This included exposure to:

- Animal abuse

- Adult content on social media

- Child sexual abuse material

*Please note these harms are not mutually exclusive

Meanwhile, 42% of clients reported harm they experienced themselves, such as harassment, impersonation, bullying, or privacy violations. A further 20% reported content on behalf of someone else, highlighting how often parents, carers, professionals, and peers step in when harmful content is discovered, especially if it affects children.

These proportions remained high, reinforcing that distressing content continues to circulate widely.

Escalations to Industry Increased

In 2025, 20% of all cases were escalated to platforms, marking a 54% increase from 2024.

Harassment, bullying, adult content, and animal abuse were the most escalated harms. This suggests that while fewer cases were handled overall, a higher proportion required direct platform escalation, showing that the content itself still needed service intervention.

As we have seen in previous years, not every case could be escalated. Common reasons included clients being based outside the UK, harms falling outside the RHC remit (such as CSAM or sextortion), or platforms themselves being out of scope. In these instances, clients were signposted to the appropriate service. In other situations, clients were supported to report directly, or content had already been removed.

However, when escalations did happen, RHC’s intervention continued to be effective. 83% of content escalated by practitioners was successfully taken down, only slightly below the previous year.

Reduced Levels of Capacity

The 1,302 cases that Report Harmful Content (RHC) handled in 2025 represent a 32% decrease compared to 2024. While the numbers could be read as a positive drop in the number of people affected, it is important to factor in how Report Harmful Content operated across the year.

Throughout 2025, RHC operated at reduced capacity due to a lack of funding. Inevitably, this limited the number of cases that could be managed and is likely a significant factor behind the overall drop in numbers. The data shows that fewer cases were handled, but despite this, certain forms of online harm remain persistent.

What This All Means

The drop in case numbers shouldn’t be read as a simple sign that online harm is declining. Instead, it reflects a year in which, even at a reduced level of operation, cases continued to show persistent areas of harm.

Harassment and privacy violations continue to be prominent, with younger adults showing higher levels of reporting. At the same time, higher escalation rates and strong takedown outcomes show the ongoing value of the service.

The data suggests that demand for support hasn’t gone away and online harm is continuing to affect users across a wide spectrum. The landscape we’re seeing shows that a service like Report Harmful Content is needed but must adapt, especially in light of the lack of individual redress in the Online Safety regime. The data from last year has prompted us to review our current service and see how we can improve and expand its offering to better support individuals and to ensure that platforms are held to account.

As Ofcom considers alternative dispute resolution models within the Online Safety framework, this data provides timely evidence of where disputes arise in reporting legal but harmful content and why trusted intermediary services remain critical for individual redress.