Ofcom has published its draft codes of practice for social media, gaming, pornography, search, and sharing sites, after being announced as the regulator for online safety under the Online Safety Act, which came into law in October 2023.

The draft codes of practice focus on addressing illegal material online, including child sexual abuse material, grooming content, and fraud.

Protecting Children Online

Formed after Ofcom published new research on the scale of children’s online experiences, the first codes of practice propose how larger and higher-risk online services should protect children from illegal online content.

By default, these services will be expected to ensure that:

- “Children are not presented with lists of suggested friends;”

- “Children do not appear in other users’ lists of suggested friends;”

- “Children are not visible in other users’ connection lists;”

- “Children’s connection lists are not visible to other users;”

- “Accounts outside a child’s connection list cannot send them direct messages;”

- “Children’s location information is not visible to any other users.”
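As a rough illustration of how a service might enforce some of these defaults in code, the sketch below models three of them: direct messages, friend suggestions and connection-list visibility. The `Account` class and every function name here are hypothetical and are not taken from Ofcom's draft codes. It also assumes the service already knows which accounts belong to children.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    # Hypothetical account model; the is_child flag is assumed to be known.
    user_id: str
    is_child: bool
    connections: set[str] = field(default_factory=set)


def can_send_direct_message(sender: Account, recipient: Account) -> bool:
    """Accounts outside a child's connection list cannot message them."""
    if recipient.is_child:
        return sender.user_id in recipient.connections
    return True


def suggested_friends(viewer: Account, candidates: list[Account]) -> list[Account]:
    """Children get no suggestions and never appear in anyone else's."""
    if viewer.is_child:
        return []
    return [c for c in candidates if not c.is_child]


def visible_connections(
    owner: Account, viewer: Account, directory: dict[str, Account]
) -> set[str]:
    """Return the part of owner's connection list that viewer may see."""
    if owner.user_id == viewer.user_id:
        return set(owner.connections)  # users always see their own list
    if owner.is_child:
        return set()  # a child's list is hidden from other users
    # Children are filtered out of other users' connection lists.
    return {uid for uid in owner.connections if not directory[uid].is_child}
```

Putting the checks in one place like this means the safer behaviour applies by default, rather than depending on each child changing their own settings.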

Further proposals also suggest that higher-risk services should use hash-matching technology to help detect and remove child sexual abuse material, and use automated tools to detect URLs that have been identified as hosting child sexual abuse material.
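To give a sense of what hash matching and URL detection involve, here is a minimal sketch. It uses an exact SHA-256 digest purely for illustration. Real deployments typically rely on perceptual hashes, which still match after an image is resized or re-encoded, and on hash and URL lists supplied by specialist organisations. The function names, the sample bytes and the `example.test` address are all assumptions made for this example.

```python
import hashlib
from urllib.parse import urlsplit


def sha256_hex(data: bytes) -> str:
    """Exact cryptographic digest; a stand-in for a perceptual hash."""
    return hashlib.sha256(data).hexdigest()


def is_known_hash(data: bytes, known_hashes: set[str]) -> bool:
    """True if the uploaded bytes match an entry in the known-hash list."""
    return sha256_hex(data) in known_hashes


def normalise_url(url: str) -> str:
    """Lower-case the host and drop scheme, query and trailing slash."""
    parts = urlsplit(url.strip())
    host = (parts.hostname or "").lower()
    return host + parts.path.rstrip("/")


def is_blocked_url(url: str, blocked_urls: set[str]) -> bool:
    """True if the normalised URL appears on the (hypothetical) blocklist."""
    return normalise_url(url) in blocked_urls


# Hypothetical lists; in practice these would come from a trusted provider.
known_hashes = {sha256_hex(b"placeholder bytes for a known file")}
blocked_urls = {"example.test/listed/page"}
```

Because an exact digest changes completely when a single byte changes, this version only catches identical copies, which is why perceptual hashing is usually preferred for images.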

Harmful Content Online

As part of a series of core measures to protect children and adults from illegal harm online, Ofcom has proposed that online services should ensure all content and search moderation teams are appropriately trained and will be expected to prepare and apply policies about how they review content.

This will be part of a series of changes expected from online services to strengthen how their users can protect themselves from harmful content, including requiring platforms to test the content that their algorithms recommend to users. Services will also have to ensure that all users can easily report harmful content and block other users.

The proposals also address how search services should provide crisis prevention information in response to queries about suicide and suicide methods.

The UK Safer Internet Centre successfully campaigned for amendments to the Online Safety Bill this summer around the enforcement of harmful content and the need for independent appeals processes. The ‘Don’t Steal My Right to Appeal’ campaign raised awareness, which resulted in a review period of two years being introduced. Ofcom must publish a full report to the Government on its ongoing enforcement of harmful online content, and review whether there is a need for an independent appeals process.

Fraud and Fake Accounts

Alongside this, the draft codes consider new measures for tackling online fraud, including the implementation of automatic keyword detection for posts involving stolen credentials. Fake accounts will also be addressed, with services that offer verification services having to explain their processes clearly.
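Automatic keyword detection of this kind could be sketched along the following lines. The term list is entirely hypothetical and is not drawn from the draft codes. The sketch also assumes that a match only flags a post for human review rather than removing it automatically.

```python
import re

# Hypothetical phrases; a real list would be maintained by fraud specialists.
SUSPECT_TERMS = [
    "stolen credentials",
    "stolen card details",
    "hacked accounts for sale",
]


def build_pattern(terms: list[str]) -> re.Pattern[str]:
    """Compile one case-insensitive pattern that matches any whole term."""
    escaped = "|".join(re.escape(term) for term in terms)
    return re.compile(r"\b(?:" + escaped + r")\b", re.IGNORECASE)


def matched_terms(post: str, pattern: re.Pattern[str]) -> list[str]:
    """Return the distinct suspect terms found in a post, lower-cased."""
    return sorted({m.group(0).lower() for m in pattern.finditer(post)})


def needs_review(post: str, pattern: re.Pattern[str]) -> bool:
    """Flag the post for a moderator if any suspect term appears."""
    return bool(matched_terms(post, pattern))
```

Plain keyword matching is easy to evade with misspellings and can flag innocent posts, so a human check after the automated step is a sensible assumption here.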

What’s Next?

Once finalised, these draft codes are expected to form the basis of online safety regulations in the UK. This is the first of four major consultations that Ofcom will publish over the next 18 months, as it establishes its new powers as online safety regulator, in line with the Online Safety Act.

Find out more about Ofcom's first draft code

The First Consultation

The draft code of practice comes as a result of the first consultation addressing how Ofcom will protect users from illegal and harmful content online. Throughout the consultation, Ofcom covered how internet services should approach their new duties to address illegal content on user-to-user and search services, covering subjects including:

- “The causes and impacts of illegal harms;”

- “How services should assess and mitigate the risks of illegal harms;”

- “How services can identify illegal content;”

- “Ofcom’s approach to enforcement.”

Later this year, Ofcom will be releasing further proposals around how adult sites ensure that children cannot access pornographic content online. A further consultation will also be published in Spring 2024, addressing more protections from harmful content for children, including suicide, self-harm, eating disorders and cyberbullying content.

Read more about Ofcom’s first consultation.

David Wright, CEO of SWGfL and Director of the UK Safer Internet Centre, said:

“We welcome these draft codes of practice from Ofcom. It is an encouraging start towards a new era of safer internet use. Once finalised, these codes will mark a significant step forward for protecting users whenever they go online. This has come after many years of trying to get the Online Safety Act over the line, so it is admirable to see proactive steps taking place to ensure these practices are implemented effectively.”

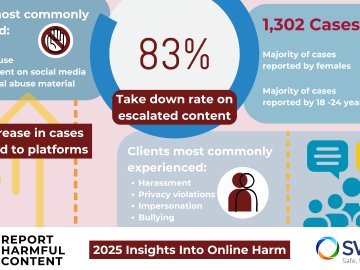

Reporting Harmful Content

If you are concerned about any harmful content online, you can contact our service, Report Harmful Content, which provides advice on the community guidelines and reporting processes for many of the biggest social media platforms.